Vector Clocks can be used to build a knowledge of the network surrounding each node, from the local perspective of that node. This means that it would be possible to calculate some metrics about the global network, at each individual node. However, we already know [1] that in human networks, the 6 hour degree approximates the betweeness centrality of the node, which can be used as a useful metric for routing [2], so why should we complicate things further?

Benefits of vector clocks

Maintaining a vector clock [3] for each node encountered can give us extra information about the network. Assuming in our vector clock updates, we also transmit information about the network as seen by each node, we can tell both the structure of the network, and a number of extra things about the network that simply counting degree cannot.

Each node can know how out of date other nodes are, simply by checking it’s records, and seeing when an update was last received about that node, this gives some notion of distance from itself to other nodes (a.k.a. ball of radius, latency).

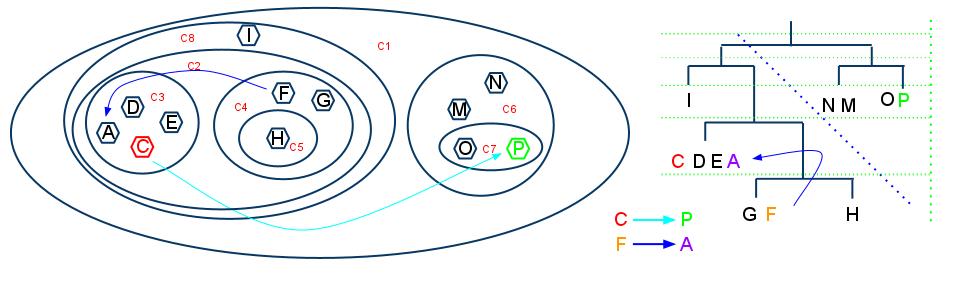

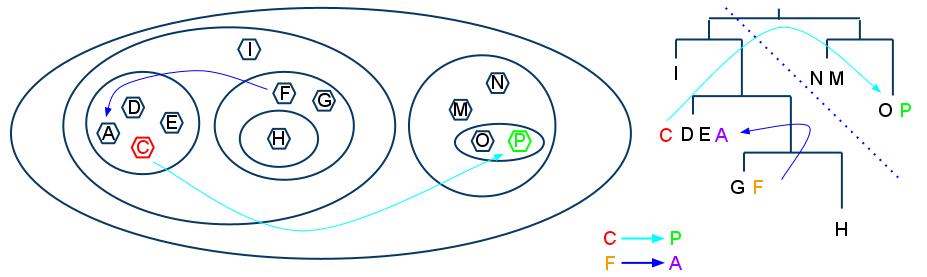

Indirect paths and essential links can be deduced at a global level by looking at the routes that are used to update vector clocks; where an update is received about an unknown node during an encounter, we can mark the encountered node as a possible carrier for the unknown node. And where one node is always used as a conduit for information updates about certain nodes, we know that it connects some other portion of the network.

The rate that other nodes update us with information (update rate) gives us a notion of how often contact is made with that node, the update amount, tells us how much knowledge about the network that node delivers . A node that has a high update rate is one that is encountered often, and one that has a high update amount may be well connected to other portions of the network.

Derived knowledge

Using these metrics, we can start to make other observations about the network. We now have sufficient information to attempt to predict future events [4][5]; Using the knowledge of update rate and out-of-dateness We may anticipate future encounters, based on the notion of periodicity [1], or perhaps even by simple Markov chains [6].

We can also try to calculate a notion of distance from one node to another using the update amount, out-of-dateness and out knowledge of the network structure. A nodes knowledge of the network also allows it to calculate things like it’s own centrality, degree and community membership, as well as giving it hints as to the same metrics for other nodes.

Vector clocks for location

Extending the idea further, it would be possible to share information about node movements along with network structure. Assuming nodes could detect their locations using some globally known scheme (e.g. simply using a grid-based approach to specifying location based on Latitude/Longitude), the vector clock updates can pass along updates about the last time a node visited a location, and perhaps the duration of the visit. This, combined with updates from other other nodes about their movements can give each node a picture of movements of other nodes. This in turn would allow us to make predictions[4][5] about where nodes will be in the future.

Usage

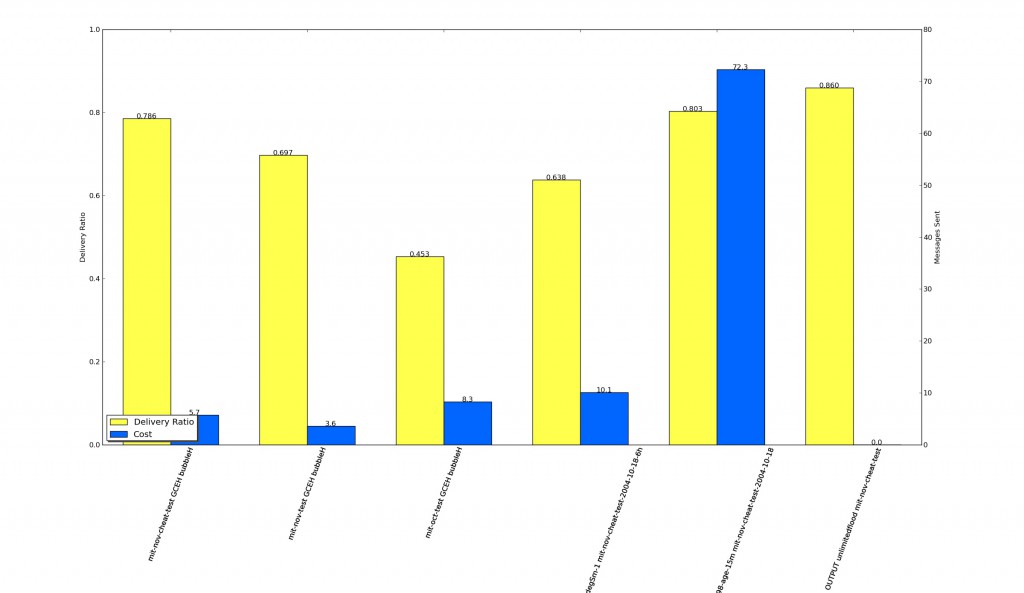

The combination of these metrics, gives us rich information to provide to a routing scheme, for example, it may be possible to adapt CAR [7] to use these metrics in it’s calculations, or a hybrid approach to BubbleRAP [2], where the global and/or local rank are based on these metrics. We may also want to devise our own scheme for routing based on this specific knowledge, however there are a large number of schemes already proposed, and it would seem sensible to improve an existing scheme, rather than create yet another one.

Refs

1. Williamson G, Cellai D, Dobson S, Nixon P. Self-management of Routing on Human Proximity Networks Spyropoulos T, Hummel KA, eds. 2009;5918:1-12. Available at: http://www.springerlink.com/index/10.1007/978-3-642-10865-5 [Accessed July 7, 2010].

2. Hui P, Crowcroft J, Yoneki E. BUBBLE Rap: Social-based Forwarding in Delay Tolerant Networks. Networks. 2008:241-250. Available at: http://portal.acm.org/citation.cfm?doid=1374618.1374652.

3. Kossinets G, Kleinberg J, Watts D. The structure of information pathways in a social communication network. In: Proceeding of the 14th ACM SIGKDD international conference on Knowledge discovery and data mining – KDD ’08. New York, New York, USA: ACM Press; 2008:435. Available at: http://portal.acm.org/citation.cfm?doid=1401890.1401945.

4. Song C, Qu Z, Blumm N, Barabási A-L. Limits of predictability in human mobility. Science (New York, N.Y.). 2010;327(5968):1018-21. Available at: http://www.ncbi.nlm.nih.gov/pubmed/20167789.

5. Ashbrook D, Starner T. Using GPS to learn significant locations and predict movement across multiple users. Personal and Ubiquitous Computing. 2003;7(5):275-286. Available at: http://www.springerlink.com/openurl.asp?genre=article&id=doi:10.1007/s00779-003-0240-0 [Accessed July 31, 2010].

6. Musolesi M, Piraccini M, Fodor K, Corradi A, A. Supporting Energy-Efficient Uploading Strategies for Continuous Sensing Applications on Mobile Phones. Pervasive. 2010. Available at: http://www.springerlink.com/index/WH71427029706513.pdf [Accessed September 3, 2010].

7. Musolesi M, Mascolo C. CAR: Context-Aware Adaptive Routing for Delay-Tolerant Mobile Networks. IEEE Transactions on Mobile Computing. 2009;8(2):246-260. Available at: http://ieeexplore.ieee.org/lpdocs/epic03/wrapper.htm?arnumber=4585387.